Posted on January 10, 2013 by Thomas Pesquet

Capsule Resource Management

A pie and a pint. A pint of British ale, to be specific, lukewarm and flat as it’s supposed to be, and served in a cosy English pub, where I am sitting close enough to the fireplace to let the flames warm me up a little after the chilly temperature outside (after all, it’s May in Cheshire, so I can be happy with 8 Celsius). What does it have to do with CRM? Everything. I will explain. But what on earth is CRM, to start with?

Don’t worry, I won’t start a theoretical lesson here, and I will mention beer again, just to keep your attention up…

CRM has numerous meanings, from Certified Risk Manager to Centre de Recherches Mathématiques or Cis-Regulatory Module, all the way through Courtesy Reply Mail, depending on your field of expertise.

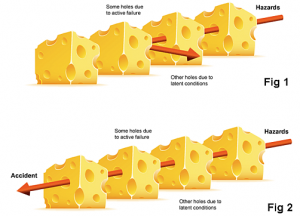

What we’re interested in, though, is Cockpit Resource Management, and its offspring Crew Resource Management. It was created in 1979, after people compiling statistics realized that human error played a role in almost every major airline accident, and understandably so: according to James Reason’s “Swiss cheese model”, an accident in our modern, complicated airliners’ world happens only when a great many different factors align, and when a risk somehow manages to go through all the layers of safety, the last and arguably most important of which is the pilots’ judgement and actions.

- As in: “the weather was usually fine, but today a dense fog was covering the airfield”.

- “and this plane is supposed to be equipped for handling low visibility but on this particular day this equipment had failed”

- “which wouldn’t have been a problem if controllers had been more at ease with the English language or equipped with surface movement radar, but this airfield was never meant to handle international flights, only domestic traffic”

- “that’s why on this day, when the city’s main airfield had closed just hours before, all flights had to divert to here, which made such a small airport unbelievably crowded and planes had to backtrack the runway, which you usually don’t do”

- “but all this wouldn’t have caused the accident if the pilots had second guessed what they thought was a take-off clearance”[1].

There you have it: all the holes aligned: weather + equipment + environment +

So yes, human error is always part of the chain of events that leads to an accident, but only because pilots, military or civilian, are the last layer of safety, and the most versatile one. They are put in a situation in which ultimately, even if everything else fails, they are given a chance, however slight, to recover and save the day: they are always the last barrier.

And don’t get me wrong: you’re not reading a plea to give pilots the credit they deserve in a modern world where people think they only push two big buttons labelled “take-off” and “landing” while computers take care of the rest. But one has to realize that, even if sometimes pilots just couldn’t take the action the situation required, in 99% of cases this last layer effectively blocks the few risks that made it all the way through. The general public, of course, never hears about it, precisely because on those days nothing bad happens in the end.

So how do we make this last layer as resilient as possible? Pilots’ technical skills are already highly trained, but NASA (interestingly enough) realized in 1979 that it was not only about technical skills: communication was a major factor, and so were leadership, teamwork, decision making, situation awareness, fatigue management and resistance to stress.

Universities picked up on this new field of research, and very soon CRM was part of every aircrew training curriculum, first in the US, then worldwide. Tools appeared, like structured briefings, which pass information on and help the crew keep track of the situation while sharing the same plan of action. Failures or unexpected events started to be treated in such a way that every crew member would be given the chance to express his or her opinion, no matter how steep the gradient of authority and experience was in the cockpit. Junior officers were now encouraged to speak up, and airlines made sure they wouldn’t be blamed for disagreeing with their senior officers. Methods for efficient decision making in time-constrained situations were designed. And so on. And to no one’s surprise, the airline business became safer and safer[2].

So it’s all very well, and I’m sure that, as an occasional passenger, you’re very happy with this, but what does it have to do with a pie and a pint… and with human spaceflight?

As CRM was now a well-established part of aircrew training, it started to spread into other high-risk activities in complex environments. Firefighters took up the torch. The nuclear industry became an avid user of CRM’s methods and tools. Oil rigs’ safety procedures are steeped in CRM. There is a trend today in surgery to use more and more of those techniques. And so on. And flying in space, as the natural extension of flying in the air, couldn’t ignore the trend any longer.

Our missions today are getting longer and longer. The standard is 6 months on the ISS, and the future will without a doubt see even longer interplanetary missions. You cannot afford poor communication, biased decision making, low situation awareness or bad teamwork on such long missions in such harsh environments. Whatever “bad” behaviours you can get away with on a one-hour flight, don’t even dream of extending them to a space mission.

So today, we select the crew according to their psychological predispositions to teamwork, we test their decision making, leadership and communication, and we train them. In the simulator, in the classroom, underwater, in isolation in Antarctica, in the wild, in the Russian snow, or in Sardinian caves. They will, we will, have to face the unexpected. We will have to react as a team. It doesn’t matter who is the smartest or the quickest: the output of the entire team cannot be greater than its slowest member’s. As it grew wider in range and acceptance, CRM went from Cockpit Resource Management to Crew Resource Management, then to Company Resource Management… it is now becoming Capsule Resource Management!

CAVES 2011: also check CAVES 2012 and NEEMO on YouTube

And that’s exactly why I was in Cheshire, of all places: being already qualified as a Type-Rating Instructor in the aviation world, I was being trained there as a CRM instructor, together with pilots from all over the world, military or civilian, fixed-wing or rotary, to further increase my exposure to these concepts, and to help spread the word into the space business. After all, CRM originated in civilian air transportation, so it’s only fitting that an ex-commercial pilot should do his part. And during my stay in this lovely corner of England, while Andy was preparing to go underground for CAVES and Samantha was gearing up for NOLS, Tim was underwater 10 miles off the coast of Florida for NEEMO. It just shows how much emphasis is now put on CRM and HBP (Human Behaviour and Performance) in space crew training. And as Tim had been in this hot and damp environment for quite a while with his crewmates, with only canned food and warm coke in their underwater habitat, I took the picture shown at the beginning of the article and sent it to him. For sure communication is part of CRM, and rightly so, but one could argue that a little motivation doesn’t do any harm…[3]

[1] Any resemblance to an actual air disaster is not purely coincidental: see the Tenerife disaster. No equipment failure was involved in the real accident; it is added here only to illustrate James Reason’s Swiss cheese model.

[2] Of course, technology also contributed greatly to the safety of air transportation.

[3] Right after receiving this picture while living under the sea, Tim got back at me (and at Andy) in great fashion: see how.